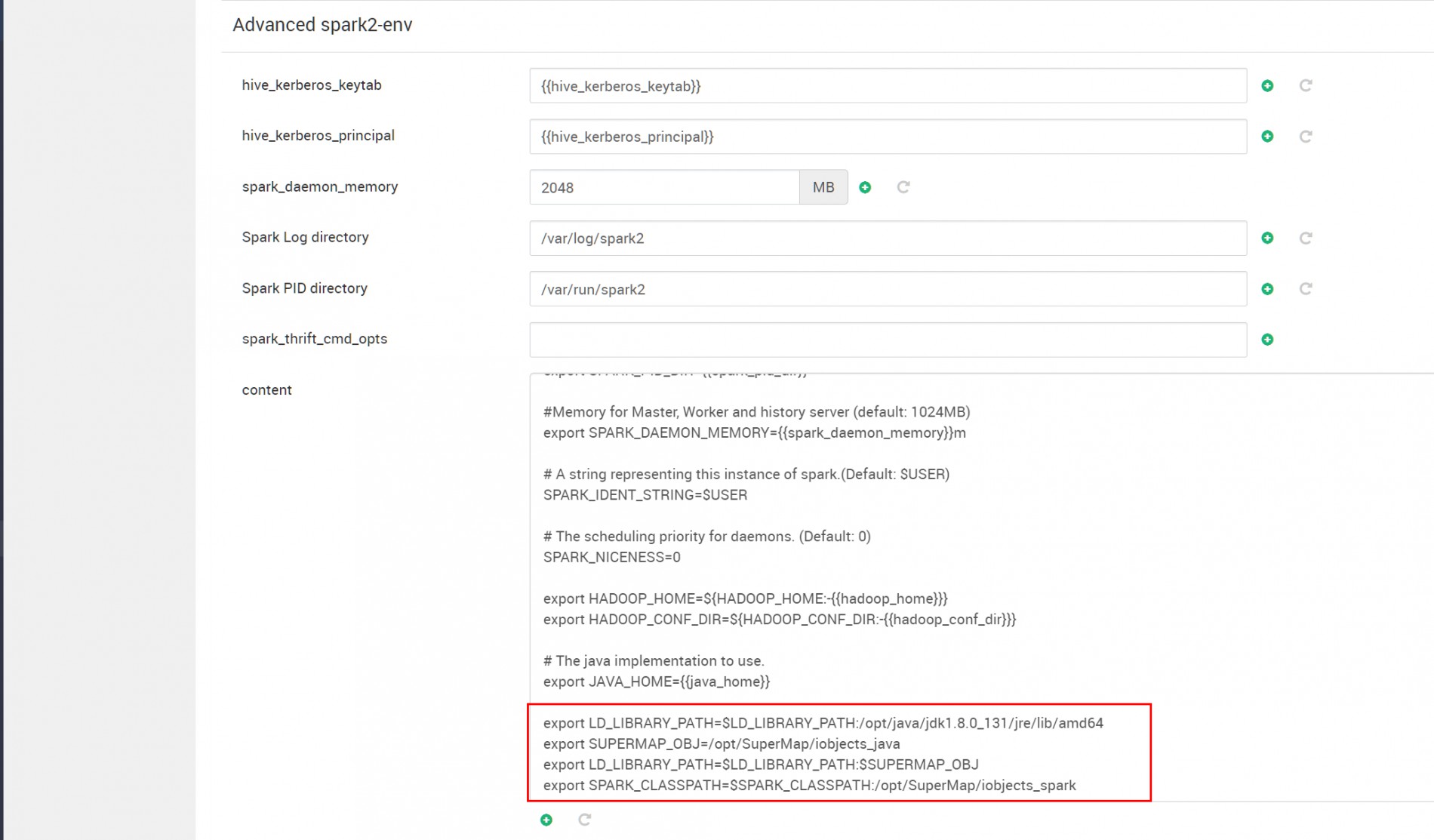

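Following the deployment documentation provided by your staff, we successfully deployed iObjects for Spark (version 10.0.1) integrated with an Apache Hadoop cluster. Programs written with the iObjects for Spark API deploy and run successfully on that Apache Hadoop cluster. However, our company uses an Ambari cluster (version 2.7.3.0). After modifying the Ambari cluster's configuration according to the same documentation, the program runs in local mode but fails in YARN mode with a license-related error. The following was added to spark-env.sh: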

The error log from the failed run:

    20/11/04 18:44:22 WARN PostGISFeatureRDDProvider: Error occurred creating table
    20/11/04 18:44:22 INFO License: lichasp.connect return exception
    java.lang.UnsatisfiedLinkError: Aladdin.Hasp.Login(JLjava/lang/String;[I)I
        at Aladdin.Hasp.Login(Native Method)
        at Aladdin.Hasp.login(Hasp.java:91)
        at com.supermap.LicenseHaspServiceImpl.internalLogin(LicenseHaspServiceImpl.java:203)
        at com.supermap.LicenseHaspServiceImpl.connect(LicenseHaspServiceImpl.java:135)
        at com.supermap.License.connect(License.java:309)
        at com.supermap.License.connect(License.java:276)
        at com.supermap.bdt.license.iObjectsLicense$$anonfun$checkiServerLicense$3.apply(iObjectsLicense.scala:92)
        at com.supermap.bdt.license.iObjectsLicense$$anonfun$checkiServerLicense$3.apply(iObjectsLicense.scala:89)
        at scala.collection.TraversableLike$WithFilter$$anonfun$foreach$1.apply(TraversableLike.scala:733)
        at scala.collection.Iterator$class.foreach(Iterator.scala:893)
        at scala.collection.AbstractIterator.foreach(Iterator.scala:1336)
        at scala.collection.IterableLike$class.foreach(IterableLike.scala:72)
        at scala.collection.AbstractIterable.foreach(Iterable.scala:54)
        at scala.collection.TraversableLike$WithFilter.foreach(TraversableLike.scala:732)
        at com.supermap.bdt.license.iObjectsLicense$.checkiServerLicense(iObjectsLicense.scala:89)
        at com.supermap.bdt.license.iObjectsLicense$.checkCoreLicense(iObjectsLicense.scala:53)
        at com.supermap.bdt.license.BDTLicense$.checkCoreLicense(BDTLicense.scala:59)
        at com.supermap.bdt.rddprovider.jdbc.PostGISFeatureRDDProvider.append(PostGISFeatureRDDProvider.scala:96)
        at com.supermap.bdt.FeatureRDDProvider$class.save(FeatureRDDProvider.scala:74)
        at com.supermap.bdt.rddprovider.jdbc.PostGISFeatureRDDProvider.save(PostGISFeatureRDDProvider.scala:25)
        at com.cloudoforce.spark.app.analysis.FollowAnalysisApp$.getUserDefinedCode(FollowAnalysisApp.scala:1100)
        at com.cloudoforce.spark.app.analysis.FollowAnalysisApp$.main(FollowAnalysisApp.scala:90)
        at com.cloudoforce.spark.app.analysis.FollowAnalysisApp.main(FollowAnalysisApp.scala)
        at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
        at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
        at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
        at java.lang.reflect.Method.invoke(Method.java:498)
        at org.apache.spark.deploy.yarn.ApplicationMaster$$anon$4.run(ApplicationMaster.scala:721)
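
A `java.lang.UnsatisfiedLinkError` on a `native` method such as `Aladdin.Hasp.Login` usually means the JVM executing that code never loaded a native library implementing the method. The `ApplicationMaster` frame at the bottom of the trace shows the driver was running inside the YARN ApplicationMaster container (cluster mode). According to the Spark documentation, environment variables set in spark-env.sh are not reflected in the ApplicationMaster process in that mode; they must be passed via `spark.yarn.appMasterEnv.[EnvironmentVariableName]` instead. The Java sketch below is a hypothetical diagnostic, not part of iObjects for Spark. When invoked from inside the application, for example by calling `NativeLibCheck.main` at the start of `main`, it prints `java.library.path` and `LD_LIBRARY_PATH` as the container sees them and reports which library-path directories contain a given library file. The class name and the default library name `YourHaspLibName` are placeholders; substitute the actual base name of the license native library from your installation.

```java
import java.io.File;
import java.util.ArrayList;
import java.util.List;

public class NativeLibCheck {

    /**
     * Returns every directory on java.library.path that contains the
     * platform-specific file for the given library base name
     * (System.mapLibraryName turns "foo" into "libfoo.so" on Linux).
     */
    public static List<String> findOnLibraryPath(String libBaseName) {
        String fileName = System.mapLibraryName(libBaseName);
        String libPath = System.getProperty("java.library.path", "");
        List<String> hits = new ArrayList<>();
        for (String dir : libPath.split(File.pathSeparator)) {
            if (dir.isEmpty()) {
                continue; // skip empty entries, e.g. from a trailing separator
            }
            if (new File(dir, fileName).isFile()) {
                hits.add(dir);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        // HYPOTHETICAL default name: replace with the real base name of the
        // HASP/license native library shipped with your iObjects installation.
        String lib = args.length > 0 ? args[0] : "YourHaspLibName";
        String fileName = System.mapLibraryName(lib);

        // Print what this JVM (driver or executor container) actually sees.
        System.out.println("java.library.path = " + System.getProperty("java.library.path"));
        System.out.println("LD_LIBRARY_PATH   = " + System.getenv("LD_LIBRARY_PATH"));

        List<String> hits = findOnLibraryPath(lib);
        if (hits.isEmpty()) {
            System.out.println(fileName + " NOT found on java.library.path");
        } else {
            System.out.println(fileName + " found in: " + hits);
        }
    }
}
```

Running the same check once with `--master local` and once in YARN mode, then comparing the printed paths, may show whether the ApplicationMaster container is missing the library directory that the local run sees.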
